“Driver-less” scraping packages, which include rvest in R and Beautiful Soup in Python, cannot execute JavaScript in a browser and thus cannot access any JavaScript-rendered elements. While they are able to download a website’s source code as an HTML document, they cannot access any data that results from user interaction. This is due to the particular way in which HTML, CSS, and JavaScript cooperate to build modern web pages. When you first open a website, the content you see comes from its source code, which you can inspect in Google Chrome at any time by pressing Ctrl+Shift+I to open the developer tools. This source code is primarily written in HTML and CSS, with the former responsible for the website’s structure and the latter for its style. While a detailed discussion of HTML and CSS is beyond the scope of this article, all we need to know is that HTML tags and CSS selectors format web elements, and the combination of the two gives each web element its own unique identifier. These unique identifiers allow driver-less web scrapers to differentiate web elements and pull out only the relevant information from the source code. As a user interacts with the website, however, new elements are formed and existing ones are altered by JavaScript.
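To see the limitation concretely, here is a minimal sketch in Python. It uses the standard library's `html.parser` as a stand-in for a driver-less package such as Beautiful Soup. The page source, the element ids, and the names `PAGE_SOURCE`, `IdCollector`, and `collect_ids` are all made up for illustration. The parser reads the markup as text and never runs the script, so it only finds the element that is already in the source.

```python
from html.parser import HTMLParser

# Hypothetical page source: one static element, plus a script that would
# add a second element only if a browser actually executed it.
PAGE_SOURCE = """
<html><body>
  <p id="static-note" class="note">Present in the source code</p>
  <script>
    var p = document.createElement("p");
    p.id = "dynamic-note";
    document.body.appendChild(p);
  </script>
</body></html>
"""


class IdCollector(HTMLParser):
    """Collects the id attribute of every tag found in the markup."""

    def __init__(self):
        super().__init__()
        self.ids = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "id":
                self.ids.append(value)


def collect_ids(html):
    """Parse the markup without executing any JavaScript."""
    parser = IdCollector()
    parser.feed(html)
    parser.close()
    return parser.ids


found_ids = collect_ids(PAGE_SOURCE)
print(found_ids)  # ['static-note']
```

The `dynamic-note` element never shows up, because it would only exist after a browser ran the script. That gap is what a webdriver is meant to close.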

Get ready to enter the world of browser automation, where your tedious tasks will be delegated to a daemon, and your prefrontal faculties will be freed to focus on philosophy.

Why use Selenium to automate web browsers? The two main reasons are web testing and data scraping.

Without web testing, programmers at companies like Apple would be unable to check whether new features work as expected before they go live, which could lead to unfortunate bugs for users (like those that occurred in the iOS 12 update). While customers are usually shocked when a company such as Apple releases buggy software, the sheer complexity of an iPhone and the number of new or updated features in each update (nearly 100 for iOS 12) make at least some mishaps extremely likely. Not only must each new component be tested, but its interactions with the rest of the phone must also be checked. However, bugs can be avoided by thorough testing, which is where browser automation comes in. While manual testing remains an integral component of a testing protocol, it is impractical to test so many complex functionalities and their interactions entirely by hand. With browser automation, use cases can be tested thousands of times in different environments, thus exposing bugs that only occur under unusual circumstances. Then, when Apple rolls out another big update, it can rerun a saved testing protocol instead of devising a new one, a practice called regression testing. Thus, automated testing allows companies to increase customer satisfaction and avoid bugs.

The second reason for driving a web browser inside of a programming environment is web scraping, which is the process of extracting content from web pages to use in your own projects or applications. While web scraping can be performed without a webdriver like Selenium, the capabilities of such tools are limited.
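The regression-testing idea described above, saving a protocol once and rerunning it after every update, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the protocol format, the placeholder URL, and the names `SAVED_PROTOCOL`, `run_protocol`, and `run_with_chrome` are hypothetical, and the only Selenium calls used are `webdriver.Chrome()`, `driver.get()`, `driver.title`, and `driver.quit()`.

```python
# Hypothetical saved test protocol: each case is a URL and a fragment that
# the page title is expected to contain. The values are placeholders.
SAVED_PROTOCOL = [
    {"url": "https://example.com/", "title_contains": "Example"},
]


def run_protocol(driver, protocol):
    """Visit each saved case and return a list of human-readable failures."""
    failures = []
    for case in protocol:
        driver.get(case["url"])
        if case["title_contains"] not in driver.title:
            failures.append(
                "{}: title {!r} lacks {!r}".format(
                    case["url"], driver.title, case["title_contains"]
                )
            )
    return failures


def run_with_chrome(protocol=SAVED_PROTOCOL):
    """Run the protocol in a real Chrome session (needs Selenium and Chrome)."""
    # Imported lazily so run_protocol itself has no third-party dependency.
    from selenium import webdriver

    driver = webdriver.Chrome()
    try:
        return run_protocol(driver, protocol)
    finally:
        driver.quit()  # always close the browser, even if a case raises
```

Calling `run_with_chrome()` after each release reruns the same saved cases, and an empty list means nothing regressed. Because `run_protocol` only needs an object with `get()` and `title`, the same protocol could be pointed at a different browser or a remote Selenium server.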
Selenium dependencies can be downloaded in a Docker container running on a Linux virtual machine; thus, these technologies are introduced and discussed. Lastly, an introduction to programming in Docker and a step-by-step protocol for setting up Selenium and binding it to RStudio are given.

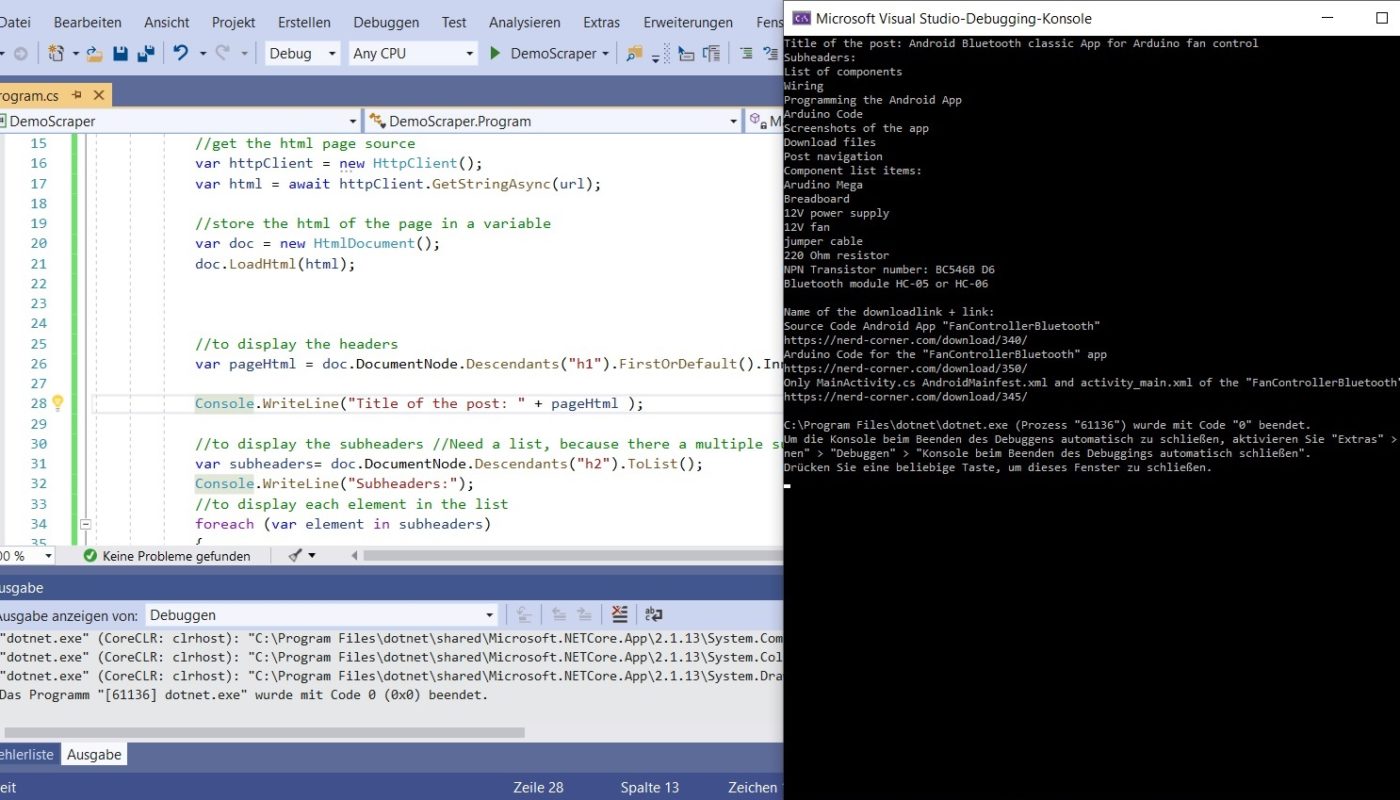

How to Use Selenium and Docker to Scrape and Test Websites

Do you want to access vast amounts of data and test your website without lifting a finger? Do you have a mountain of online grunt work that a computer program could handle? Then you need browser automation with the Selenium WebDriver. Selenium is the premier tool for testing and scraping JavaScript-rendered web pages, and this tutorial will cover everything you need to set up and use it on any operating system.

I'm working on making a PDF web scraper in Python. Essentially, I'm trying to scrape all of the lecture notes from one of my courses, which are in the form of PDFs. I want to enter a URL, and then get the PDFs and save them in a directory on my laptop. I've looked at several tutorials, but I'm not entirely sure how to go about doing this. None of the questions on StackOverflow seem to be helping me either. Here is what I have so far:

    import requests

    url = input("Enter the URL you want to scrape from: ")
    response = requests.get(url, stream=True)
    #for link in soup.find_all('a'): # Finds all links
    #    if suffix in str(link): # If the link ends in.

Originally, I had gotten all of the links to the PDFs, but did not know how to download them; the code for that is now commented out. Now I've gotten to the point where I'm trying to download just one PDF, and a PDF does get downloaded, but it's a 0KB file. If it's of any use, I'm using Python 3.4.
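For the PDF question, here is a hedged sketch of one way the pieces could fit together. It is not the asker's code: the helper names (`LinkCollector`, `find_pdf_links`, `download_pdf`, `scrape_pdfs`) are hypothetical, link extraction uses the standard library's `html.parser` rather than Beautiful Soup, and matching on a `.pdf` suffix is an assumption about how the lecture notes are linked. The download step uses `requests.get(..., stream=True)` with `iter_content`. One plausible cause of a 0KB file is that the streamed body is never written to disk, but that is a guess, since the download code is not shown.

```python
import os
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def find_pdf_links(html, base_url):
    """Return absolute URLs for every link whose path ends in .pdf."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    absolute = [urljoin(base_url, href) for href in collector.hrefs]
    return [u for u in absolute if urlparse(u).path.lower().endswith(".pdf")]


def download_pdf(pdf_url, directory):
    """Stream one PDF to disk, writing each chunk as it arrives."""
    import requests  # third-party; imported lazily so the helpers above stay stdlib-only

    filename = os.path.basename(urlparse(pdf_url).path)
    target = os.path.join(directory, filename)
    response = requests.get(pdf_url, stream=True)
    response.raise_for_status()
    with open(target, "wb") as handle:  # binary mode, since PDFs are not text
        for chunk in response.iter_content(chunk_size=8192):
            handle.write(chunk)
    return target


def scrape_pdfs(page_url, directory):
    """Download every PDF linked from page_url into directory."""
    import requests

    os.makedirs(directory, exist_ok=True)
    page = requests.get(page_url)
    page.raise_for_status()
    links = find_pdf_links(page.text, page_url)
    return [download_pdf(link, directory) for link in links]
```

Something like `scrape_pdfs(input("Enter the URL you want to scrape from: "), "lecture_notes")` would then tie it together, where `lecture_notes` is a placeholder directory name. This only works when the PDF links are present in the page's source code. If they are added by JavaScript, a browser-driving tool such as Selenium would be needed to render the page first.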
